Clausius did not clearly define or explain the absolute value of the entropy of a physical system, or the more general meaning and value of entropy, which left the Clausius entropy somewhat mysterious and speculative. This article incorporates text from this source, which is available under the CC BY 3.0 license.

As for a formula for entropy, there isn't just one. First it's helpful to properly define entropy: it is a measure of how dispersed matter and energy are in a certain region at a particular temperature. Since entropy deals primarily with energy, it is intrinsically a thermodynamic property (in this physical sense, there isn't a non-thermodynamic entropy).

The Boltzmann entropy is obtained if one assumes that all the component particles of a thermodynamic system can be treated as statistically independent. The probability distribution of the system as a whole then factorises into the product of N separate identical terms, one term for each particle. When the summation is taken over each possible state in the 6-dimensional phase space of a single particle (rather than the 6N-dimensional phase space of the system as a whole), the Gibbs entropy simplifies to the Boltzmann entropy $S_B = -N k_B \sum_i p_i \ln p_i$. This is exact for an ideal gas of identical particles that move independently apart from instantaneous collisions, and is an approximation, possibly a poor one, for other systems. The term Boltzmann entropy is also sometimes used for entropies calculated with this factorisation approximation, i.e., assuming each particle has an identical independent probability distribution and ignoring interactions and correlations between the particles.
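The factorisation argument can be checked numerically. The following is a minimal sketch, not a definitive implementation: the three-state single-particle distribution, the choice N = 4, the function name `gibbs_entropy`, and the unit choice k_B = 1 are all illustrative assumptions rather than anything taken from the text. It builds the joint distribution of independent particles, confirms that its Gibbs entropy equals N times the single-particle entropy, and then shows a correlated case where the factorised value overestimates the true entropy.

```python
import itertools
import math


def gibbs_entropy(probs, k_B=1.0):
    """Gibbs entropy S = -k_B * sum(p * ln p); zero-probability states contribute nothing."""
    return -k_B * sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical single-particle distribution over three states (illustrative values only).
single = [0.5, 0.3, 0.2]
N = 4  # number of independent, identically distributed particles (assumption)

# Joint distribution over all 3**N system states:
# each joint probability is the product of the single-particle probabilities.
joint = [math.prod(combo) for combo in itertools.product(single, repeat=N)]

S_joint = gibbs_entropy(joint)           # sum over the full system state space
S_factored = N * gibbs_entropy(single)   # N times the single-particle sum

# Correlated counter-example: two two-state particles that always agree.
# Joint states are ordered (0,0), (0,1), (1,0), (1,1).
correlated_joint = [0.5, 0.0, 0.0, 0.5]
marginal = [0.5, 0.5]                    # each particle on its own looks uniform

S_corr = gibbs_entropy(correlated_joint)         # true entropy of the pair
S_corr_factored = 2 * gibbs_entropy(marginal)    # value from the independence assumption

print("independent:", S_joint, S_factored)
print("correlated: ", S_corr, S_corr_factored)
```

For the independent case the two numbers agree up to floating-point rounding; for the correlated pair the factorised value is larger, which illustrates why the independence assumption can be a poor approximation when particles interact.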

Two approaches can be used to calculate entropy changes. The first, based on the definition of absolute entropy provided by the third law of thermodynamics, uses tabulated values of absolute entropies of substances. The second, based on the fact that entropy is a state function, uses a thermodynamic cycle similar to those discussed previously.

The Boltzmann entropy excludes statistical dependencies. Equation (1) is a corollary of equation (3), and not vice versa: in every situation where equation (1) is valid, equation (3) is valid also, but the converse does not hold.
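The corollary relation between equations (1) and (3) can be written out in one step. This sketch assumes the standard forms, which are not reproduced in the text here: equation (1) as Boltzmann's $S = k_B \ln W$ for $W$ equally probable microstates, and equation (3) as the Gibbs form $S = -k_B \sum_i p_i \ln p_i$. Setting $p_i = 1/W$ for every microstate in the Gibbs form gives:

```latex
\begin{align}
S &= -k_B \sum_{i=1}^{W} p_i \ln p_i
   = -k_B \sum_{i=1}^{W} \frac{1}{W} \ln \frac{1}{W} \\
  &= -k_B \, W \cdot \frac{1}{W} \, (-\ln W)
   = k_B \ln W .
\end{align}
```

The reverse step is not available: a non-uniform distribution $p_i$ has a well-defined Gibbs entropy but no single count $W$ of equally probable microstates, which is why the implication runs in only one direction.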

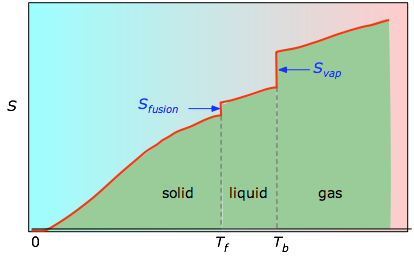

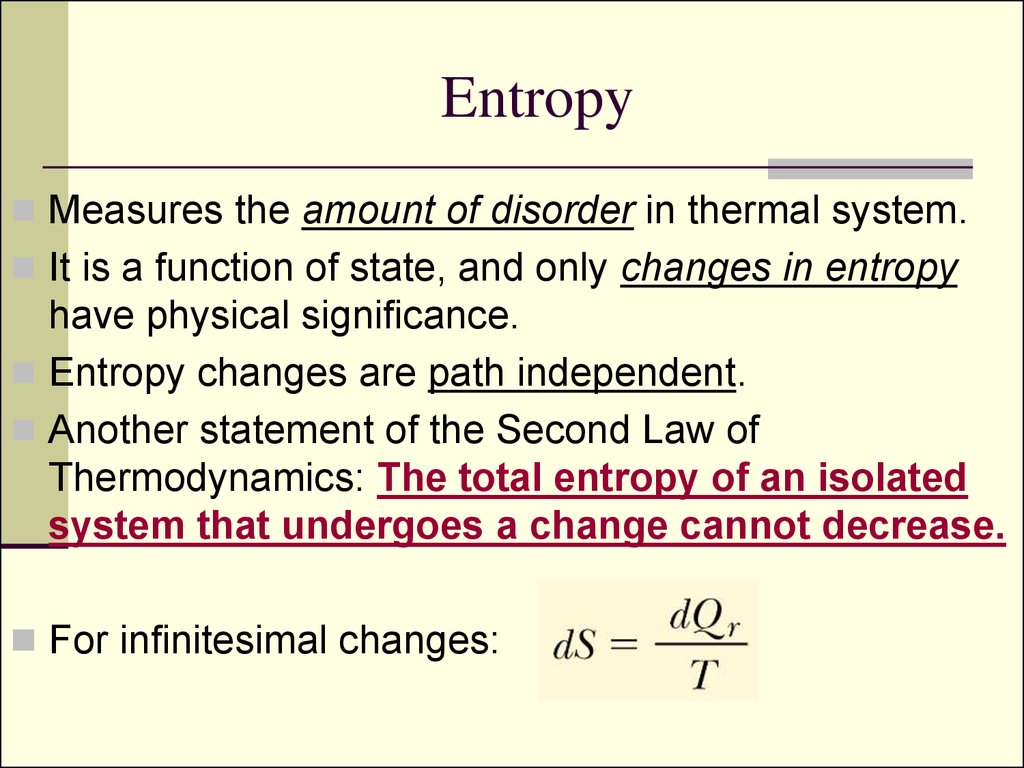

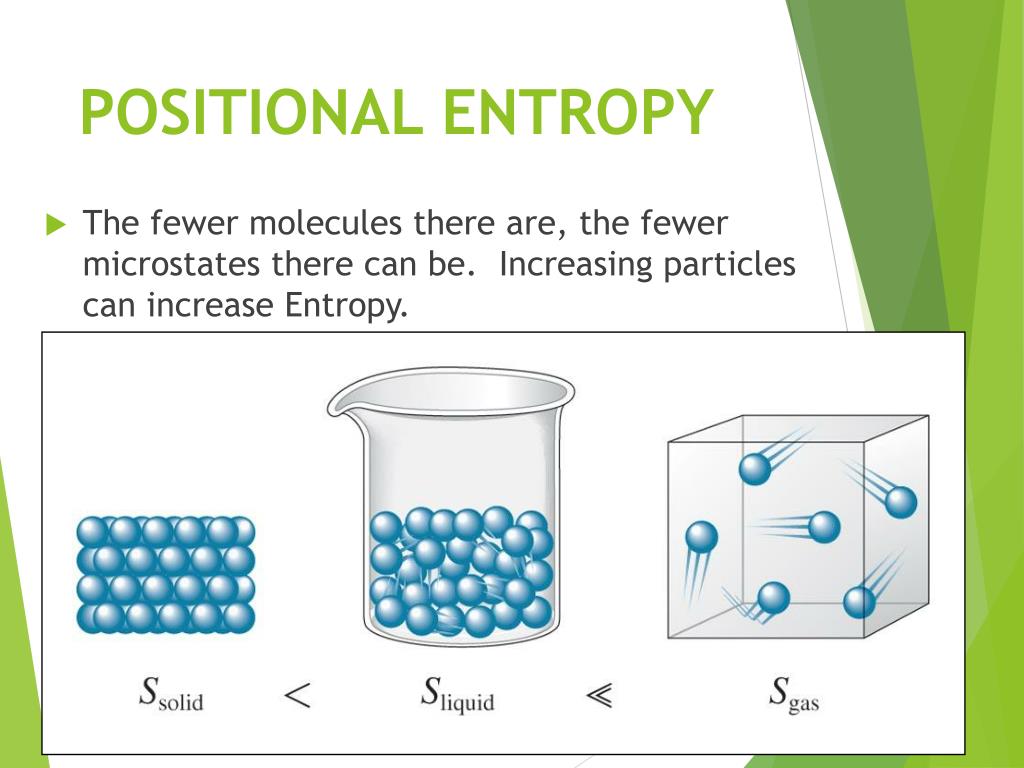

Here $k_B$ is the Boltzmann constant. Boltzmann used a $\rho \ln \rho$ formula as early as 1866. He interpreted $\rho$ as a density in phase space, without mentioning probability, but since this satisfies the axiomatic definition of a probability measure we can retrospectively interpret it as a probability anyway. Gibbs gave an explicitly probabilistic interpretation in 1878. Boltzmann himself used an expression equivalent to (3) in his later work and recognized it as more general than equation (1).

The reaction of barium hydroxide with ammonium thiocyanate is spontaneous but highly endothermic, so water, one product of the reaction, quickly freezes into slush. When water is placed on a block of wood under the flask, the highly endothermic reaction that takes place in the flask freezes the water, so the flask becomes frozen to the wood. Thus enthalpy is not the only factor that determines whether a process is spontaneous. For example, after a cube of sugar has dissolved in a glass of water so that the sucrose molecules are uniformly dispersed in a dilute solution, they never spontaneously come back together in solution to form a sugar cube. Moreover, the molecules of a gas remain evenly distributed throughout the entire volume of a glass bulb and never spontaneously assemble in only one portion of the available volume. Explaining why these phenomena proceed spontaneously in only one direction requires an additional state function called entropy (S), a thermodynamic property of all substances that is proportional to their degree of "disorder". In Chapter 13, we introduced the concept of entropy in relation to solution formation. Here we further explore the nature of this state function and define it mathematically.

In mathematical statistics, the Kullback–Leibler (KL) divergence (also called relative entropy and I-divergence), denoted $D_{\mathrm{KL}}(P \parallel Q)$, is a type of statistical distance: a measure of how one probability distribution P differs from a second, reference probability distribution Q.
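The Kullback–Leibler divergence mentioned above can be tied back to entropy with a small numerical sketch. The function names `entropy` and `kl_divergence`, the three-state distribution, and the use of natural logarithms with k_B = 1 are illustrative assumptions. The example checks the identity $D_{\mathrm{KL}}(P \parallel U) = \ln W - H(P)$, where $U$ is the uniform distribution over $W$ states and $H(P) = -\sum_i p_i \ln p_i$.

```python
import math


def entropy(p):
    """H(P) = -sum(p_i * ln p_i), in nats; zero-probability states are skipped."""
    return -sum(x * math.log(x) for x in p if x > 0)


def kl_divergence(p, q):
    """D_KL(P || Q) = sum(p_i * ln(p_i / q_i)).

    Returns infinity if Q assigns zero probability to a state that P can occupy.
    """
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf
            total += pi * math.log(pi / qi)
    return total


# Hypothetical distribution over W = 3 states (illustrative values only).
p = [0.5, 0.3, 0.2]
W = len(p)
uniform = [1.0 / W] * W

d = kl_divergence(p, uniform)
gap = math.log(W) - entropy(p)  # how far H(P) falls short of its maximum ln W

print("D_KL(P || uniform):", d)
print("ln W - H(P):       ", gap)
```

The two printed values agree up to rounding: the divergence from the uniform distribution measures how far a distribution's entropy falls below the equal-probability maximum $\ln W$.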
